Federated Learning – IoT Anomaly Detection

In this project, our researchers simulated various attacks and defenses on Federated Learning (FL) applications, with a focus on IoT Intrusion Detection Systems (IDS). We have extended our behavior models of IoT devices to cover a number of newer devices, capturing the common characteristics needed to select suitable anomaly detection algorithms.

Federated Learning is an attractive collaborative machine learning approach for smart systems in general. It is especially promising for the Internet of Things, where the potential for smart applications and smart systems is extensive.

IoTDefender

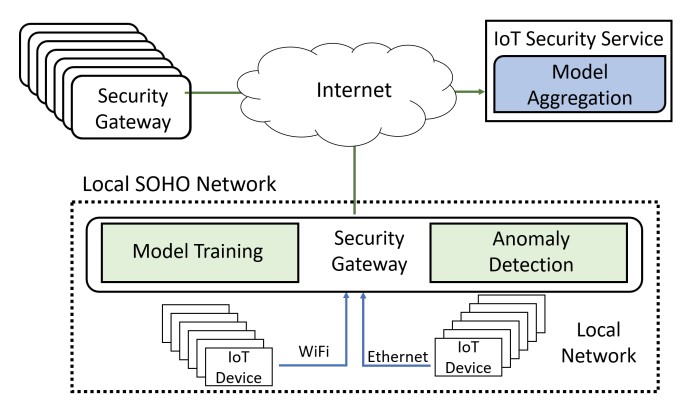

In the IoTDefender project we investigate how federated learning (FL) can make the training of behavioural models for IoT intrusion detection more adaptive and autonomous. In this approach, deep learning-based neural network algorithms learn the typical packet sequences that IoT devices emit when communicating over the network. Since most IoT devices have only a few well-defined functions, we have shown that their behaviour can be accurately captured using such models.
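The idea of learning typical packet sequences and flagging deviations can be illustrated with a deliberately simplified sketch. It does not use a neural network: a first-order transition-probability table stands in for the deep learning model, and the packet symbols (`dns`, `tls_hello`, `scan`, ...), the function names, and the probability threshold are all hypothetical choices made for illustration.

```python
from collections import Counter, defaultdict


def train_transition_model(sequences):
    """Estimate P(next symbol | current symbol) from benign packet-symbol sequences.

    Assumption: each packet has already been mapped to a discrete symbol.
    """
    counts = defaultdict(Counter)
    for seq in sequences:
        for cur, nxt in zip(seq, seq[1:]):
            counts[cur][nxt] += 1
    model = {}
    for cur, nxt_counts in counts.items():
        total = sum(nxt_counts.values())
        model[cur] = {nxt: n / total for nxt, n in nxt_counts.items()}
    return model


def anomaly_rate(model, seq, threshold=0.05):
    """Return the fraction of transitions whose learned probability is below threshold."""
    transitions = list(zip(seq, seq[1:]))
    if not transitions:
        return 0.0
    unlikely = sum(
        1 for cur, nxt in transitions
        if model.get(cur, {}).get(nxt, 0.0) < threshold
    )
    return unlikely / len(transitions)


# Hypothetical benign traffic of a single-purpose device.
benign = [["dns", "tls_hello", "data", "data", "fin"]] * 20
model = train_transition_model(benign)

normal_score = anomaly_rate(model, ["dns", "tls_hello", "data", "fin"])
attack_score = anomaly_rate(model, ["dns", "scan", "scan", "scan", "fin"])
```

Because a device with few functions produces few distinct transitions, even this crude model separates the familiar sequence from the unfamiliar one; a sequence model based on neural networks plays the same role with far more expressive power.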

However, if only local data were used to train these models, obtaining accurate models would take considerable time, since many IoT devices communicate only sparsely over the network and therefore generate little training data. Federated learning therefore offers a very good way to aggregate the models trained by many system participants into a global model shared by all, significantly improving the speed and accuracy of model training and detection.
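The aggregation step can be sketched as a weighted average of client models, in the style of federated averaging. This is a minimal illustration under assumptions: the text does not state which aggregation rule the project uses, models are represented as flat lists of floats, and `federated_average` and the example values are hypothetical.

```python
def federated_average(client_weights, client_sizes):
    """Combine client models into a global model, weighting each by its sample count.

    client_weights: list of equal-length lists of floats (one per client).
    client_sizes:   number of local training samples per client.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one sample count per client model")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    global_weights = [0.0] * len(client_weights[0])
    for weights, size in zip(client_weights, client_sizes):
        for i, w in enumerate(weights):
            global_weights[i] += w * size / total
    return global_weights


# Two hypothetical clients; the second has three times as much local data.
global_model = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

Weighting by sample count lets devices with more observed traffic contribute more to the global model, while devices with little data still benefit from what the others have learned.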

In this project we also consider the problem of data or model poisoning, in which an adversary uses, e.g., compromised devices in the network to inject maliciously modified data into the training process of the detection model in order to plant so-called backdoors in it. Such backdoors can cause, e.g., attack traffic to be incorrectly classified as benign by the resulting detection model.

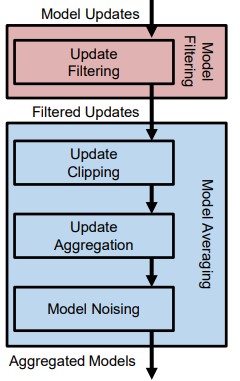

We have therefore developed a framework called FLGuard that provides a two-level approach for identifying potentially poisoned model updates and mitigating the impact that potential poisoning attacks can have on the resulting global detection models.
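To make the idea of a two-level defense concrete, here is a generic sketch: first discard updates that point away from the majority, then limit the influence of the remaining ones by clipping their norms before averaging. This is not FLGuard's actual algorithm, which is not described here; the cosine-similarity filter, the median-norm clipping bound, and all function names are assumptions chosen for illustration.

```python
import math
from statistics import median


def norm(v):
    """Euclidean norm of a flat update vector."""
    return math.sqrt(sum(x * x for x in v))


def cosine(a, b):
    """Cosine similarity; defined as 0.0 if either vector is all zeros."""
    na, nb = norm(a), norm(b)
    if na == 0 or nb == 0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def filter_updates(updates, min_cosine=0.0):
    """Level 1 (assumed): keep updates aligned with the coordinate-wise median update."""
    reference = [median(col) for col in zip(*updates)]
    return [u for u in updates if cosine(u, reference) > min_cosine]


def clip_and_average(updates):
    """Level 2 (assumed): clip each update to the median norm, then average."""
    if not updates:
        raise ValueError("no updates left to aggregate")
    bound = median(norm(u) for u in updates)
    clipped = []
    for u in updates:
        n = norm(u)
        scale = min(1.0, bound / n) if n > 0 else 1.0
        clipped.append([x * scale for x in u])
    return [sum(col) / len(clipped) for col in zip(*clipped)]


# Three hypothetical benign updates and one large update pointing the opposite way.
updates = [[1.0, 0.1], [0.9, -0.1], [1.1, 0.0], [-5.0, 0.0]]
accepted = filter_updates(updates)
aggregated = clip_and_average(accepted)
```

The two levels complement each other in this sketch: filtering removes updates that are clearly out of line, while clipping bounds the damage from any poisoned update that slips through the filter.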

Related Publications

T. D. Nguyen, S. Marchal, M. Miettinen, H. Fereidooni, N. Asokan and A. Sadeghi, "DÏoT: A Federated Self-learning Anomaly Detection System for IoT," 2019 IEEE 39th International Conference on Distributed Computing Systems (ICDCS)